I have been spending some time putting into practice the ideas that I’ve been developing with Prof John Gero around the role of interpretation in computational creativity, in the domain of Python-generated MIDI music. This post shows how to transpose MIDI with Python and sets the scene for more research to follow.

There is a great tutorial from the Deep Learning team on how to train a system to produce music from examples. However, the examples produced by the code in the tutorial are fairly awful musically, because the system is learning from songs written in many different keys. (The examples in the zip file at the bottom of that page, which include improvements, are far more interesting musically.)

It helps to train the system with all files in the same key, so that the results are harmonious rather than discordant (as they suggest on that linked page; compare the files with and without this).

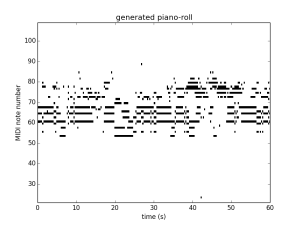

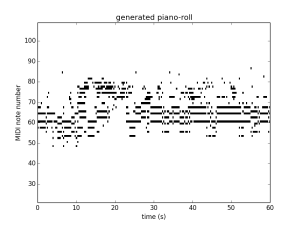

There is a script below that uses Python to transpose MIDI files into C major. Compare the two samples on the tutorial page with these two, produced after transposition into a standard key: sample1 and sample2

The piano rolls for these are respectively:

The point of this post is to share this script (below) for anyone else who wants to transpose MIDI files using Python, and to set the scene for more research to follow in this domain.
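As a rough illustration of the approach, here is a minimal sketch of how such a transposition script might look. It assumes the third-party music21 library for reading the file and estimating its key. The function names are hypothetical, and the table values are the ones posted in the comments to this post, so treat it as a starting point rather than the definitive script.

```python
# Semitone shifts that move each tonic to C (major keys) or A (minor keys).
# Key names follow music21's spelling, where "-" marks a flat.
MAJORS = {"A-": 4, "G#": 4, "A": 3, "A#": 2, "B-": 2, "B": 1, "C": 0,
          "C#": -1, "D-": -1, "D": -2, "D#": -3, "E-": -3, "E": -4,
          "F": -5, "F#": 6, "G-": 6, "G": 5}
MINORS = {"G#": 1, "A-": 1, "A": 0, "A#": -1, "B-": -1, "B": -2, "C": -3,
          "C#": -4, "D-": -4, "D": -5, "D#": 6, "E-": 6, "E": 5,
          "F": 4, "F#": 3, "G-": 3, "G": 2}


def half_steps_to_standard_key(tonic_name, mode):
    """Return the shift in semitones to C major or A minor."""
    table = MAJORS if mode == "major" else MINORS
    return table[tonic_name]


def transpose_to_standard_key(in_path, out_path):
    """Estimate the key of a MIDI file and write a transposed copy."""
    # Imported here so the lookup tables can be used without music21 installed.
    import music21

    score = music21.converter.parse(in_path)
    key = score.analyze("key")  # music21's built-in key estimation
    shift = half_steps_to_standard_key(key.tonic.name, key.mode)
    score.transpose(shift).write("midi", out_path)
    return key.tonic.name, key.mode, shift
```

Calling `transpose_to_standard_key("in.mid", "out.mid")` (placeholder file names) would write the transposed copy and return the detected key along with the shift that was applied. Because the key is estimated, it is worth checking the result by ear on a few files.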

Having replicated what the DeepLearning people have done, the challenge is to:

1) set up a limited conceptual space within which the system generates; and

2) have it go through a phase of interpretation (currently not in the algorithm at all) where it can change this conceptual space

The reason is that my work suggests that generation occurs within a limited conceptual space, whereas interpretation uses the breadth of experience. Music is a useful domain for demonstrating this, and I will post more examples of Python MIDI music as they are developed.

12 replies on “Transpose midi with python for computational creativity in the domain of music”

Just what I needed and didn’t have to code myself. Thanks.

Your code seems to lack sharp keys; I fixed it on my machine with:

majors = dict([("A-", 4),("G#", 4),("A", 3),("A#", 2),("B-", 2),("B", 1),("C", 0),("C#", -1),("D-", -1),("D", -2),("D#", -3),("E-", -3),("E", -4),("F", -5),("F#", 6),("G-", 6),("G", 5)])

minors = dict([("G#", 1), ("A-", 1),("A", 0),("A#", -1),("B-", -1),("B", -2),("C", -3),("C#", -4),("D-", -4),("D", -5),("D#", 6),("E-", 6),("E", 5),("F", 4),("F#", 3),("G-", 3),("G", 2)])
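For anyone editing tables like these by hand, a quick sanity check may help catch a missing or mismatched spelling. The sketch below is only an illustration: `table_problems` is a hypothetical helper, and `flats_only` is a stand-in built by removing the sharp names, not the original table from the post.

```python
majors = {"A-": 4, "G#": 4, "A": 3, "A#": 2, "B-": 2, "B": 1, "C": 0,
          "C#": -1, "D-": -1, "D": -2, "D#": -3, "E-": -3, "E": -4,
          "F": -5, "F#": 6, "G-": 6, "G": 5}

# Each pair names the same pitch, so both spellings need the same shift.
ENHARMONIC_PAIRS = [("C#", "D-"), ("D#", "E-"), ("F#", "G-"),
                    ("G#", "A-"), ("A#", "B-")]


def table_problems(table):
    """List missing spellings and enharmonic pairs whose shifts differ."""
    problems = []
    for sharp, flat in ENHARMONIC_PAIRS:
        for name in (sharp, flat):
            if name not in table:
                problems.append("missing " + name)
        if sharp in table and flat in table and table[sharp] != table[flat]:
            problems.append(sharp + " and " + flat + " disagree")
    return problems


# Stand-in for a table without sharp keys (an assumption for illustration).
flats_only = {name: shift for name, shift in majors.items() if "#" not in name}

print(table_problems(majors))      # []
print(table_problems(flats_only))  # ['missing C#', 'missing D#', 'missing F#', 'missing G#', 'missing A#']
```

The same check can be run on the minors table without changes.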

Thanks Hannu, great to see improvements. If your code is online somewhere, feel free to post a link here in the comments for others that are following.

Nick

Hey there,

I wonder, did you alter the program or just keep it the same as the original (i.e. 200 loops for both training and testing, 150 RBM nodes and 100 RNN nodes)?

Cheers mate!

Hi Elliot, yes, I did just leave it with the default settings for training and testing – I didn’t get too deep into tweaking the network, it was just as a proof of concept.

Best,

Nick

Hello, I have used this code to transpose some MIDI files to C major/A minor. However, I have noticed that it changes the timing of the notes. Any suggestions on how to fix this? Thanks

Hi Niamh, I’m surprised that this happens as it should just be changing the pitch. I’m sorry but I’m not maintaining the code, so you’re kind of on your own here.

Best,

Nick

Hello,

Is there a way to use music21 to transpose a chord in minor to major and vice-versa?

Thanks…….

440Hz.

This is amazing!

Thank you so much! <3

Does anyone have any experience running this in Python 3? I have a project that requires converting a large number of 8-16 bar single-channel MIDI files to C.

Sadly my complete inexperience with Python has only allowed me to make one file before I hit (Pdb). Any help would be greatly appreciated. Happy to make a contribution for anyone’s time too. All the best! Peter

It should be relatively easy to “port” to Python 3. From a quick look, the print statements are the only thing that needs adjusting: Python 3 requires brackets around the arguments to print, i.e. print("hello world") instead of print "hello world". There’s also an automated conversion tool: https://docs.python.org/3/library/2to3.html

majors = dict([("A-", 4),("G#", 4),("A", 3),("A#", 2),("B-", 2),("B", 1),("C", 0),("C#", -1),("D-", -1),("D", -2),("D#", -3),("E-", -3),("E", -4),("F", -5),("F#", 6),("G-", 6),("G", 5)])

minors = dict([("G#", 1), ("A-", 1),("A", 0),("A#", -1),("B-", -1),("B", -2),("C", -3),("C#", -4),("D-", -4),("D", -5),("D#", 6),("E-", 6),("E", 5),("F", 4),("F#", 3),("G-", 3),("G", 2)])

Where can I find these values for any key, e.g. if I want to transpose to A major?
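The values in these tables appear to be the number of semitones from each tonic to the target tonic (C for major, A for minor), wrapped into the range -5 to 6 so the music moves by the smallest interval. Under that reading, a table for any target key can be computed instead of looked up. A minimal sketch, with hypothetical helper names, using "-" for flats as in the tables above:

```python
NATURAL_PITCH_CLASS = {"C": 0, "D": 2, "E": 4, "F": 5, "G": 7, "A": 9, "B": 11}

NAMES = ["C", "C#", "D-", "D", "D#", "E-", "E", "F", "F#",
         "G-", "G", "G#", "A-", "A", "A#", "B-", "B"]


def pitch_class(name):
    """Map a key name such as 'F#' or 'B-' to a pitch class 0..11."""
    base = NATURAL_PITCH_CLASS[name[0]]
    return (base + name.count("#") - name.count("-")) % 12


def shift_to_key(source, target):
    """Smallest shift in semitones from source tonic to target tonic (-5..6)."""
    diff = (pitch_class(target) - pitch_class(source)) % 12
    return diff - 12 if diff > 6 else diff


# Table for transposing any major key to A major.
to_a_major = {name: shift_to_key(name, "A") for name in NAMES}
print(to_a_major["C"], to_a_major["G"], to_a_major["E"])  # -3 2 5
```

With "C" as the target this reproduces the majors table, and with "A" it reproduces the minors table. For minor-key pieces you would probably target the relative minor of your chosen major key, which for A major is F# minor.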